JavaScript has a lot of use cases, and one of them is automated browser detection. This technical talk gives an overview of the state-of-the-art automated browser for ad fraud, how it cheats many bot detection solutions, and the unique methods that have been used to identify it anyway.

Finding Stealthy Bots in Javascript Hide and Seek

FAQ

There are both beneficial and malicious bots on the web. Beneficial bots include those used for testing, while malicious bots can perform activities like ad fraud, social media manipulation, and denial-of-service attacks.

Basic methods for detecting bots include analyzing the user agent string, checking for the absence of JavaScript execution, and observing if a bot can handle complex behaviors like running JavaScript or generating tokens that mimic human interactions.

Browser quirks are inconsistencies or unique behaviors in how different browsers operate. These can be used to detect bots because while bots can mimic many human behaviors, replicating these specific quirks accurately can be more challenging.

Automated browsers have significantly advanced bot capabilities by allowing bots to emulate human browsing more convincingly. These tools can run a DOM, execute JavaScript, and fake user interactions, making bots harder to detect.

Puppeteer is a browser automation library developed by Google that drives a real Chrome or Chromium browser, which makes it particularly adept at mimicking human browser interactions without being easily detected. It supports extensions and modifications that can enhance bot operations, making it a key tool in sophisticated botting activities.

Advanced bots have evolved to bypass CAPTCHAs by employing techniques that mimic human responses or by using machine learning algorithms to solve CAPTCHA challenges, which makes CAPTCHAs far less effective as a standalone deterrent.

Emerging techniques for detecting sophisticated bots include analyzing inconsistencies in browser fingerprinting, using behavioral analysis to detect unnatural patterns of interaction, and monitoring session level data for anomalies.

As bot technology evolves, bots are becoming better at mimicking human behaviors and browser characteristics, making it increasingly difficult to distinguish them from real human users without advanced detection techniques.

Video Summary and Transcription

The Talk discusses the challenges of detecting and combating bots on the web. It explores various techniques such as user agent detection, tokens, JavaScript behavior, and cache analysis. The evolution of bots and the advancements in automated browsers have made them more flexible and harder to detect. The Talk also highlights the use of canvas fingerprinting and the need for smart people to combat the evolving bot problem.

1. Introduction to Web Bots

This section asks what's going on with bots on the web: the simple detections, how the bots got better, what is possibly the best bot out there at cheating detection solutions, and how you can find it anyway. Advertisers, social media platforms, and concert ticket sellers all face this issue because the internet was not designed with bot detection in mind. The basics start with the user agent, the HTTP request header identifying the browser: a Python bot is easy to spot and block, so bot makers hide it.

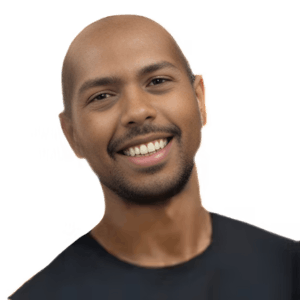

Hey, everyone. I'm Adam. I'm super happy to be here, and I'm here to ask what's going on with bots on the web. I'm not talking about the nice ones, the testing. I'm talking about the bad ones. We'll talk about simple detections, how the bots got better. We'll talk about what's possibly the best bot out there cheating on most detection solutions. And we'll lastly get to my favorite part, which is how you can find it anyways.

But before all that, one reason I'm here is because I always liked hacking stuff, and now I'm the reverse engineer for DoubleVerify. They measure ads. But my job is playing hide and seek with these bots, so advertisers can avoid them. But it's not just advertisers and the games. It's going to be social media, concert ticket sellers, a lot of people facing this issue, because the internet was not designed with bot detection in mind. Seriously. The only real standard is robots.txt, telling bots what they're allowed and disallowed to do. Basically the honor system, asking good people to play nice. When you rely on that, yeah, real story, when I was 16, a high school project may or may not have denied service to some site. But some people actually do this on purpose and at scale, denying service to real users, using what they have to steal sneakers, sneaking around social media with fake users. I practice that part. So to make the internet better, we want to detect them.

Let's talk detections. Starting with the basics. Not because bot makers can't play around these, but because they're usually the first thing you rely on when you come up with something more complicated, because simple detections are pretty straightforward. User agent. That's the HTTP request header identifying the browser. You guys know this. You see it's a Python bot. You block that. Probably not a real user behind that. They figured this out, the bot makers know, they hide the user agent. Let's say you don't run JavaScript on your bot.
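The user agent check described here can be sketched in a few lines. This is a minimal illustration, not the speaker's implementation: the function name `looksLikeScriptedClient` and the marker list are assumptions made for the example, and as the talk points out, a bot that rewrites its user agent passes this check trivially.

```javascript
// Hypothetical marker list for illustration; it is far from complete.
const SCRIPTED_CLIENT_MARKERS = [
  "python-requests",
  "python-urllib",
  "curl/",
  "wget/",
  "headlesschrome",
];

// Returns true when the User-Agent header is missing or names a scripting tool.
function looksLikeScriptedClient(userAgent) {
  // A missing or empty header is itself unusual for a real browser.
  if (typeof userAgent !== "string" || userAgent.trim() === "") {
    return true;
  }
  const ua = userAgent.toLowerCase();
  return SCRIPTED_CLIENT_MARKERS.some((marker) => ua.includes(marker));
}
```

A server would call this with the value of the incoming request's `User-Agent` header and treat a `true` result as one weak signal among several, not as proof.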

2. Detecting Bots with Tokens and JavaScript

You can use tokens and JavaScript behavior to detect bots on your site. Browser quirks can be used to verify the true nature of a browser. Digging deep into JavaScript can reveal attempts to hide something.
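One way to "dig deep" for tampering, sketched under assumptions: built-in functions stringify with a `[native code]` body, while a replacement written in JavaScript exposes its own source. The helper name `looksNative` is hypothetical, and the talk does not say this is the check the speaker uses.

```javascript
// Keep a reference to the original toString so a later override of
// someFunction.toString on the function object itself is bypassed.
const nativeToString = Function.prototype.toString;

// Returns true when fn stringifies the way a built-in function does.
function looksNative(fn) {
  if (typeof fn !== "function") {
    return false;
  }
  return /\{\s*\[native code\]\s*\}\s*$/.test(nativeToString.call(fn));
}
```

In a page, a detection script might apply this to APIs that bots commonly patch. It is only one signal: functions created with `bind` also stringify as native, and a careful bot can patch `Function.prototype.toString` before the detection script runs.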

Maybe you make a token as the detection on the site, and you actually make sure it's created. So if you have a bot that's navigating to your site, not generating this token, not running JavaScript, you know something's going wrong. But let's say they do run JavaScript. All of a sudden, you can check how the browser behaves. You people probably hate browser quirks. Bot makers hate them too, because they can be used to verify what's under the hood and not what the browser is reporting at face value. And sometimes you can dig deep in JavaScript to see if somebody's trying to hide something.
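The token idea can be sketched on the server side with Node.js. This is an assumption-laden illustration, not the scheme from the talk: the names `issueToken`, `hasValidToken`, and `SECRET` are hypothetical, and the sketch assumes the page's script receives the token and sends it back with later requests, so a client that never runs JavaScript never presents it.

```javascript
const crypto = require("node:crypto");

// Hypothetical secret; a real server would load this from configuration.
const SECRET = "replace-with-a-real-secret";

// Token that the page's script is expected to send back for this session.
function issueToken(sessionId, secret = SECRET) {
  return crypto.createHmac("sha256", secret).update(sessionId).digest("hex");
}

// True only if the request carries the token issued for this session.
function hasValidToken(sessionId, token, secret = SECRET) {
  if (typeof token !== "string") {
    return false; // no token at all: the script probably never ran
  }
  const expected = Buffer.from(issueToken(sessionId, secret), "hex");
  const received = Buffer.from(token, "hex");
  if (received.length !== expected.length) {
    return false; // timingSafeEqual requires equal lengths
  }
  return crypto.timingSafeEqual(received, expected);
}
```

A request that reaches the site without a valid token suggests JavaScript was not executed. A bot built on an automated browser does run the script and will pass, which is why the talk moves on to checking browser behavior.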
