1. Introduction to GraphQL and Resolvers

We're here to talk about GraphQL, no code, no problem, how GraphQL servers break and how to harden your resolvers. We'll discuss the current state of GraphQL servers, how programmatic resolvers fail, and how to fix them. Additionally, we'll explore declarative resolvers and demonstrate their functionality.

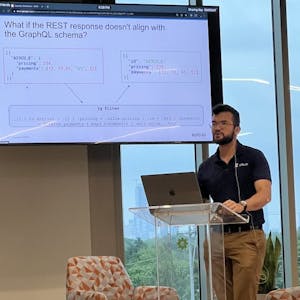

♪♪ Thank you for joining us. We're here to talk about GraphQL, no code, no problem, how GraphQL servers break and how to harden your resolvers. So, first introductions, my name's Kevin. I've been here at solo.io now for several years, working on a wide variety of projects but relevant to what we're talking about today, a large champion of our envoy in GraphQL and Envoy Filter and related projects. And we're joined here graciously today by Sai.

Yeah, hi, I'm Sai. I'm a software engineer at solo. Along with Envoy, Istio, and Flagger, I've contributed to multiple open-source projects, including Glue, and I'm here talking about GraphQL, as well. I'm one of the engineers leading the GraphQL and service mesh effort here at solo. And speaking of solo, who exactly are we? We are a startup in Cambridge, Massachusetts, and we consider ourselves industry leaders in application networking, service mesh, and modern API gateway technologies. And more recently, GraphQL, but let's continue.

So, yeah, so goals for today. We wanna talk about the current state of like GraphQL servers, specifically resolvers. How do programmatic resolvers fail? How do we fix them? Then we wanna take a concrete look at declarative resolvers, how that might work and demo it. Let's just see it in action.

2. Current State of GraphQL Servers

We have a simple mobile client making a GraphQL request to a GraphQL server. The server resolves the request for payments and plan services. There are three replicas of each service. The GraphQL server reconciles the data and returns it in a singular GraphQL response.

So getting right into it. The current state of things. I mean, this is a GraphQL conference. You know, I think we should all be familiar with this, but just to recap. So on the left here, we have a simple mobile client making a GraphQL request to a GraphQL server. This server here is resolving the request for a payments and plan service, like I think like a phone service. We have three replicas of the payments and three replicas of the plan service as delineated by the little dotted lines. This GraphQL server is resolving some fields on the payment service and some on the plan service, reconciling that back together via the schema and returning all the data on the right here in that singular GraphQL response.

3. Working of Resolvers and Handling Server Outages

We have a single resolver for the plan service, which returns a standard JSON response to the GraphQL server. We have three replicas of the plan service, randomly chosen for load balancing. In the event of a server outage, one of the replicas may return a request timeout, causing errors and incomplete data. To minimize pain, we can implement readiness and liveness probes, such as making HTTP GET requests to the service at /health and monitoring for failures.

So kind of diving into it, we have a single resolver here. So I just wanted to focus on the plan service. You can see here that it returns just like a standard JSON response to our GraphQL server and that the server will know in the execution engine how to put that back into your schema and send the response back up.

In particular, I'd like to focus on how this is kind of working a little under the covers. Like I mentioned before, we have three replicas of the plan service here. Now when we have three replicas, we're just kind of picking one at random for load balancing. To be concrete, I'll use Kubernetes as an example here, but your infrastructure has some kind of equivalent logic somewhere if you're, you know, choosing between three services of a replica to meet the scaling demands of your infrastructure.

In the Kubernetes example here, the resolver code might look like this. You have an HTTP client that tries to make a request, a GET request with query parameters to that DNS entry. In the Kubernetes example, you resolved that via DNS to get that cluster IP service on the right, on the Kubernetes service, and that cluster IP is a virtual IP that gets resolved via load balancing to one of these three IPS for pods. The key here being is that we're kind of randomly picking one of these three pods to actually hit one of these three replicas of the service.

So now if we think about server outages, let's pretend one of those three replicas goes down for whatever reason. We could say hardware failure, bug in the code that's a memory leak, deadlock, what have you. What this will mean in practice is that when the GraphQL server resolves that request, one out of those three replicas will come back with a request timeout, and then the client will see errors. The UI will get incomplete data. This is obviously bad and something we want to avoid. So yeah, like I mentioned, we wanna minimize pain. How can we do this?

Looking at an example, I know I leaned on Kubernetes before, which is, this is just to make things concrete. But again, this is just something your infrastructure probably has in some shape or another, and you just may not be aware of. In here, we're gonna look at what readiness and liveness probes do. Again, the scenarios that this is trying to resolve are primarily things like a memory leak, deadlocking your code, or even just like a hardware failure, getting things rescheduled. The crux of this is that in Kubernetes, at least you have a pod, you have a process here. In this example, we have a liveness probe, and what it's doing is it makes an HTTP GET request to your service at slash health. It waits three seconds before making the first request, and most importantly, it makes a request every three seconds. And so it's really just polling all the time. And if it starts seeing failures, it knows that your service is unhealthy. It doesn't really care the reason why, it just knows that it's unhealthy and it's probably better to restart kick the process, move it to other hardware. And again, this is the Kubernetes way, but this could be done in a variety of different ways, a watchdog, some kind of process monitor, infiltrated by puppet. And a lot of this is kind of outside of the realm of GraphQL conference, but this probably exists in some shape or form. So our goal is to minimize pain.

4. Passive Health Checking and Resolvers

Can we do more? We have a polling mechanism, but let's consider a scenario with a busy API. We can implement passive health checking by using resolver code that is instrumented with knowledge of IPs and DNS. When the GraphQL server starts, we get all the IPs behind our service and pick a random healthy IP to route requests to. If we receive a response that's not okay, we mark it as not healthy. The challenge is where to put this logic in our GraphQL server. We can explore options like GraphQL server middleware, DNS server, or a local proxy. Resolvers should ideally be light and not burdened with concerns like circuit breaking and authentication. It's better to keep these concerns local and consider using a proxy with context-aware knowledge or integrating GraphQL server with existing proxy or infrastructure like API Gateway and Service Mesh.

Can we do more? You know, we have this polling mechanism every three seconds, but let's think about the scenario where we have an API that's very busy. That's why we have to be replicas. We were receiving thousands of requests. That's pretty slow. It's gonna take us a while to, you know, inject those end points and actually serve healthy responses to our client.

So that active pulling mechanism is like an active health checking. I'm sure we've heard of maybe circuit breaking before, but another way we could solve this is with passive health checking. And so kind of a very dumb pseudo-code in the left on how this might work, just to go through it together, is let's just say our resolver code was instrumented with some knowledge of the IPs and DNS. And so what we could do is, you know, when the GraphQL server starts, we get all the IPs behind our service, we have all our replicas. Then when we resolve, we just pick a random healthy IP and we route to it. And if we happen to get a response that's not okay, then we eject it, we mark it as not healthy, and the next time we won't try. Then we can, you know, move them back to healthy after timeout, but the crux of this is, if we're receiving thousands of requests per second, we can instruct our server to say, hey, if you've got five errors in a row, like stop trying, you know? Like this end point's probably not okay, our resolver can do a lot better, be smarter, and not send requests to this replica that it knows is probably not gonna work. Now this code, obviously not production ready, you definitely don't want to instrument your resolvers with this kind of logic, but this logic does need to live somewhere. If we want to bring this into our GraphQL server, where do we put it? It could be in some kind of GraphQL server middleware behind your GraphQL server, we could try to move parts of it to a DNS server, bad for other reasons, we could use a node local proxy, another load balancer, there's a lot of options here, we can dig deeper.

Just kind of stepping back, so we looked at circuit breaking here as an example, but the problem here is that GraphQL is a single endpoint, it's a single server living on a node that has to be responsible for a lot of responsibilities before sending requests to downstream services. So if I'm operating a GraphQL server and implementing resolvers, sometimes I have to instrument them with things like failover concerns, circuit breaking, like we just talked about, a retry policy, authorization, authentication, rate limiting. You can see here on the left is kind of our ideal resolver. We wanna keep resolvers light, very simple to read and reason about. But we often see resolvers instrumented with things like on the right, here we have checks for authentication, to make sure you are allowed to access the backend. But this isn't ideal. Further, some of these concerns that we're talking about and like have to be handled on the node, like the hardware itself that the GraphQL server resides on. For example, like circuit breaking. If we wanted to move this to some other hardware, we're introducing another point of failure. More network points of failure, that's harder to reason about. It's distributed systems, it's much better if we keep it local. So we mentioned of those options, we could look at putting another proxy that has this kind of context aware knowledge on the same hardware. So you can have a GraphQL server that then routes to a local proxy and that proxy has that circuit breaking authentication awareness and routes to services behind. Or we could kind of shove them together. What if they were one thing? What if we thought of our GraphQL server as less of a server and more of like a protocol or a layer that exists in our pre-existing proxy or infrastructure? So this is kind of bleeding into the ideas that I had of API Gateway and Service Mesh. This is a little bit of kind of like Solo's bread and butter and perhaps new for a bunch of you attending a GraphQL talk.

5. API Gateways, Proxies, and Declarative Resolvers

We discuss the role of API Gateways and reverse proxies in handling network traffic. Service mesh is a newer concept using similar proxies for routing within a network. We explore the benefits of merging GraphQL servers with proxies, such as caching, authorization, and routing. Declarative resolvers are illustrated with examples of REST and gRPC requests. JQ is introduced as a templating language for constructing data during runtime. It solves the problem of GraphQL not natively working with key-value pair responses.

But the real idea here on the left, for example, is that if you think of requests coming in and out of your company, your network, usually you have something at the edge, this thing on the edge is called an API Gateway or sometimes behind a load balancer. It's responsible for things like a firewall and preventing leaks of personal data. That kind of logic is implemented in a bunch of reverse proxies. For example, NGINX, Envoy proxy, HAProxy, and then it handles complex routing, failover, circuit breaking to your backend services, whether they're monolithic microservices or cloud functions or Kubernetes clusters. Really, the only point I wanted to make is that you've got reverse proxies and they likely already exist in your infrastructure, whether you're aware of them or not.

Likewise, that handles what we'd call north-south, you know, into or out of your network traffic. On the right, we also have the idea of service mesh. This is kind of even more nascent or new, but this is using the same similar sets of proxies, so Envoy, NGINX, HAProxy, to handle routing between services within your network. So think about, like, standardized observability, metrics, tracing, as well as, you know, common use cases, just encryption everywhere, zero trust networking, and your infrastructure may have this already and you may already have Anhui proxy, you know, all next to all your services without even knowing.

So we talked a little bit about the problems that we're seeing currently with resolvers and our current deployment model and a lot of GraphQL servers where you might need to have a proxy in front of it or a reverse proxy in front of your current GraphQL server and we talked about how we can merge the GraphQL server and the proxy together. And let's talk a little bit about how this works and what are the benefits of actually doing that. So now that we have our GraphQL server as part of our proxy, we get a lot of benefits that previously came just from having proxies, just caching, authorization, authentication, rate limiting, web application firewall, as well as routing traffic, GraphQL traffic from users to the backend services. And you can also have traffic routed securely via TLS to your backend services and have secure communication within your cluster from your proxy, your API gateway to your backend services. And we talked a little bit about the fact that we could do this in code, or we can also do this as configuration. We chose to do it more as configuration. And here's an example of what declarative resolvers look like. So on the left side, we have a resolver that's resolving a GraphQL request by routing, by creating a request to a REST upstream. And on the right, we have a gRPC request being created. So we see a lot of the backbone of what exactly this declarative configuration looks like, where we're constructing an upstream request. On the left, we see a path field for actually setting the path to the REST upstream, the HTTP method, as well as some additional headers we might wanna set on the REST upstream request. And on the right, we see, for the gRPC request, we have the service name, the method name, other gRPC parameters that are needed to create an upstream request, as well as the actual reference to the upstream. In this case, this could be a Kubernetes service, a destination, an external service, or whatnot. And then we have this JQ field. We're gonna get a little more into what exactly JQ is, but essentially, it's a templating language for allowing us to construct data during runtime from our GraphQL request into the specific protocol that the upstream requires. In this case, we're constructing the path dynamically from the GraphQL arguments and the query. So let's get into a little bit about what JQ actually is, and we can best sort of frame what JQ solves by outlining a problem. And in this case, we have a schema that returns a array of review objects, and the review objects have a reviewer and ratings field. But then our upstream responds back with data that looks like this, where we have a bunch of key value pairs, and essentially a dictionary that have the reviewer name and the ratings. Now this isn't something that GraphQL knows how to natively work with. The GraphQL spec does not include this sort of data shape.

6. Using JQ for Transformation and Outlier Detection

We use JQ to transform upstream data into data that GraphQL servers can recognize. Reusing existing infrastructure and treating GraphQL as a protocol improves developer experience and reliability. In a demo, we interact with services via a GraphQL query, using the Istio ingress gateway and Envoy proxy. Some requests fail due to communication issues with upstreams. Mitigation involves using an outlier detection policy to remove unhealthy services from the endpoint pool.

So we have the problem of having to transform the upstream data into data that GraphQL servers can actually recognize. And in order to do that, we use JQ, which is an expressive transformation language. And here, we're showing that through a series of filters, we are able to transform the upstream request data or the response data into data that actually is recognized by our GraphQL schema and our GraphQL filter. I highly recommend looking more into JQ as it's an extremely powerful language for transforming JSON. Thanks Sai for jumping in with a concrete example here.

Just taking a step back, a million foot view of what we're looking to do here is really just reuse existing infrastructure. A lot of companies already have API gateways or service mesh and already have these reverse proxies implemented. Obviously leaning on your CDN for cache queries and long-lived backing services passing them through for GRPC GraphQL subscriptions. But really the sentiment is less is more. If we can get away by reusing our infrastructure and treating GraphQL as a protocol rather than a separate server altogether, then we can get improved developer experience and reliability.

Yeah, so let's look at this in practice. Let's see an actual demo of this working in cluster. So this is our Kubernetes cluster environment. On the left side, we can see a user interface for interacting with our cluster, and you can see in our cluster we have a service that's running called product page. Now we have product page version one and version two that's running. Now we wanna interact and get a response from these services via a GraphQL query, and we can do that by curling our ingress gateway, which is running right here. You can see it's the Istio ingress gateway, and that is running Envoy. If we check out the image in the manifest, you can see that it's running our Envoy proxy, and within the Envoy proxy is a GraphQL filter. So if I send a GraphQL request, which should look familiar to the Envoy proxy, you'll see that we get a response back with the data that we want. Now let's continue to add a bit of details onto this request, and we get back a response, such as this. Now you might have noticed that some of our requests are actually failing. And it's failing with this rest resolver got response status 503 from the upstream. Now this means that our GraphQL filter is not able to communicate with one of our upstreams, and this is expected. If we actually take a look at the images used in these two different deployments of the product page service, one of the images is actually a non-responsive curl image, which means that if we send any sort of traffic to it, it's not gonna respond with any data. But the other image is an actual service, our booking for a product page service provided by Istio. So what's actually happening here is that our traffic is being implicitly load balanced by Kubernetes in between the two backend services for our product page service. So how do we sort of mitigate this behavior? Well, we can use an outlier detection policy, which allows us to essentially take out the unhealthy services from the endpoint pool that the GraphQL requests are routed to in the upstream. So what does this outlier detection policy look like? It looks something like this. It says, we can apply this outlier detection policy to our services and say that if our services receive at least one consecutive error, we wanna remove that particular instance of the service from the healthy node pool, or the healthy endpoint pool. So let's go ahead and apply this outlier detection policy.

7. Resilient Resolvers and Engagement

We observed the status field being updated to 'state accepted', indicating successful processing. The bad service, product page V2, is removed from the healthy endpoint pool upon receiving a 503 error. After 60 seconds, the endpoint is added back to check if it needs to be circuit-broken again. Our resolvers are made resilient with failover, retries, and other policies. Thank you for having us at GraphQL Galaxy. Join our public Slack and follow us on Twitter for further engagement.

We can go ahead and take a look at the policy, wait for the status field to be updated, which means that our system has processed it. There we go, and we can see the status field is updated right here. That says state accepted. So now what we should see is that we can continue to curl our service, and the second it receives a 503, the bad service, the product page V2, will be removed from the healthy endpoint pool. So now the rest of our requests should be completely healthy, and you'll see that we're getting back the data that we expect.

Now there's one more thing, and that's the base injection time. That means how long the endpoint will be ejected from the healthy endpoint pool. All this says is that after 60 seconds, the endpoint will be added back in to see if it needs to be circuit-broken again, or if the endpoint is healthy again. And so that's how we've made our resolvers very resilient against services in the backend going down. I didn't demonstrate it in this demo, but we can add things like failover, retries, and other policies that make our resolvers super resilient.

And that was our demo. So on behalf of solo.io and Kevin and I, we just wanted to say thank you for having us here at GraphQL Galaxy. Please join us in our public Slack and follow us on Twitter. We're really happy to continue the discussion there and engage with you guys in the community.

Comments