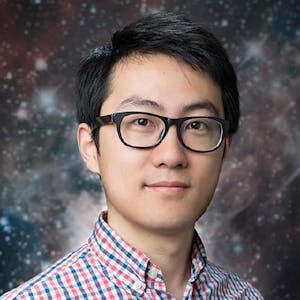

Solving your front-end performance problems can be hard, but identifying where you have performance problems in the first place can be even harder. In this workshop, Abhijeet Prasad, software engineer at Sentry.io, dives deep into UX research, browser performance APIs, and developer tools to show you why your Vue applications may be slow. He'll help answer questions like "What does it mean to have a fast website?" and "How do I know if my performance problem is really a problem?" By walking through different example apps, you'll learn how to use Core Web Vitals, the Navigation Timing API, and distributed tracing to better understand your performance problems.
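To give a flavor of the browser performance APIs mentioned above, here is a minimal sketch in TypeScript. It is not taken from the workshop material. The helper names `summarizeNavigation` and `rateLcp` and the `NavigationTimingLike` interface are hypothetical; the interface mirrors a few fields of the browser's `PerformanceNavigationTiming` entry, and the rating cutoffs assume the commonly published Largest Contentful Paint thresholds of 2.5 s and 4 s.

```typescript
// Subset of the fields found on the browser's PerformanceNavigationTiming entry.
// (Hypothetical interface, declared here so the sketch also runs outside a browser.)
interface NavigationTimingLike {
  startTime: number;
  responseStart: number;
  domContentLoadedEventEnd: number;
  loadEventEnd: number;
}

interface NavigationSummary {
  timeToFirstByteMs: number;
  domContentLoadedMs: number;
  loadMs: number;
}

// Hypothetical helper: turns raw timestamps into durations relative to navigation start.
function summarizeNavigation(entry: NavigationTimingLike): NavigationSummary {
  return {
    timeToFirstByteMs: entry.responseStart - entry.startTime,
    domContentLoadedMs: entry.domContentLoadedEventEnd - entry.startTime,
    loadMs: entry.loadEventEnd - entry.startTime,
  };
}

type Rating = "good" | "needs-improvement" | "poor";

// Hypothetical helper: rates a Largest Contentful Paint value in milliseconds.
// Assumed thresholds: up to 2500 ms is good, above 4000 ms is poor.
function rateLcp(lcpMs: number): Rating {
  if (lcpMs <= 2500) return "good";
  if (lcpMs <= 4000) return "needs-improvement";
  return "poor";
}

// In a browser, a real entry could be read like this:
// const [entry] = performance.getEntriesByType("navigation") as PerformanceNavigationTiming[];
// if (entry) console.log(summarizeNavigation(entry));
```

Keeping the calculation separate from the browser call makes the numbers easy to check in isolation, for example with a hand-written entry in a unit test.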

Besides core Vue3 features, we'll walk through examples of how to use popular libraries with Vue3.

Table of contents:

- Introduction to Vue3

- Composition API

- Core libraries

- Vue3 ecosystem

Prerequisites:

IDE of choice (IntelliJ or VS Code) installed

Node.js + npm
