FAQ

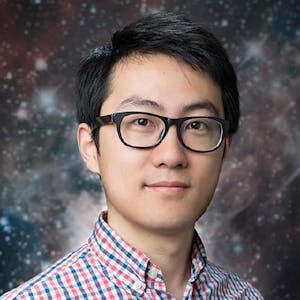

The panelists include Evan You, creator of Vue.js and Vite; Shawn Wang (aka swyx), head of developer experience at Temporal.io and author of The Coding Career Handbook; and Fred K. Schott, creator of Snowpack and Astro.

Next gen build tools generally adopt emerging standards such as native ES modules and dynamic imports. They leverage as many native capabilities as possible and incorporate tools written in languages other than JavaScript (for example esbuild, which is written in Go) to handle core build-tool work.

Compared with traditional build tools, next gen build tools lean on native browser capabilities and emerging standards and rely less on extensive external tooling. The goal is a more streamlined, efficient process that fits more closely with modern web development practices.

Webpack remains a powerful tool with unique features such as module federation. However, projects tend to become tightly coupled to its plugins and build pipeline, so switching to another build tool can be difficult for a project deeply integrated with Webpack.

Both Vite and Snowpack provide a fast development experience by serving native ES modules with hot module replacement (HMR). Vite is more opinionated and ships a preconfigured production build setup (based on Rollup), while Snowpack offers more flexibility in choosing a bundler for production.
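To make the native-ESM approach more concrete: without an import map, browsers cannot resolve bare specifiers such as `"react"`. A dev server that serves source files as ES modules therefore rewrites those imports to URLs it can serve. The TypeScript below is a minimal sketch of that rewrite step. It assumes a hypothetical `/@deps/` URL prefix and uses a regex-based matcher. `rewriteBareImports` and `isBareSpecifier` are illustrative names, not part of Vite's or Snowpack's API, and real tools parse imports with a proper module lexer rather than a regex.

```typescript
// Hypothetical URL prefix under which a dev server would serve pre-bundled dependencies.
const DEPS_PREFIX = "/@deps/";

// A specifier is "bare" (e.g. "react", "@scope/pkg") if it is not relative ("./", "../"),
// root-absolute ("/"), or a full URL with a scheme ("https://...", "node:fs").
function isBareSpecifier(spec: string): boolean {
  if (/^(\.{1,2})?\//.test(spec)) {
    return false;
  }
  return !/^[a-zA-Z][a-zA-Z\d+.-]*:/.test(spec);
}

// Regex sketch: matches `from "x"`, side-effect `import "x"`, and dynamic `import("x")`.
// It can be fooled by import-like text inside comments or strings; real tools use a lexer.
const IMPORT_SPECIFIER_RE = /(\bfrom\s*|\bimport\s*\(?\s*)(["'])([^"']+)\2/g;

// Rewrite bare specifiers to server URLs, leaving relative and absolute imports untouched.
function rewriteBareImports(source: string): string {
  return source.replace(
    IMPORT_SPECIFIER_RE,
    (match: string, prefix: string, quote: string, spec: string) =>
      isBareSpecifier(spec) ? prefix + quote + DEPS_PREFIX + spec + quote : match,
  );
}

const input = [
  'import React from "react";',
  "import { helper } from './utils.js';",
  'const lazy = import("lodash-es");',
].join("\n");

console.log(rewriteBareImports(input));
// import React from "/@deps/react";
// import { helper } from './utils.js';
// const lazy = import("/@deps/lodash-es");
```

Because each file is transformed individually when the browser requests it, the server never has to rebuild a whole bundle. This is a large part of why native-ESM dev servers start quickly and apply HMR updates quickly.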

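The major challenge of shipping unbundled ES modules to production is network overhead, even with advances like HTTP/2 and HTTP/3. These protocols improve multiplexing, but the browser only discovers a module's imports after fetching it. Deep import graphs therefore still produce request waterfalls that bundled code avoids, especially during initial page loads.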
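The waterfall effect can be estimated with a simplified model. It assumes there are no `modulepreload` hints, so each module's imports are discovered only after that module is fetched and parsed. Under that assumption, modules load in waves, and the number of sequential round trips equals the depth of the import graph. A single bundle needs only one request. The sketch below uses a hypothetical module graph and an illustrative function, `estimateUnbundledLoad`, and computes both numbers with a breadth-first search. It ignores caching, parse time, and bandwidth.

```typescript
// Each module maps to the modules it imports statically.
type ModuleGraph = Record<string, string[]>;

interface LoadEstimate {
  requests: number;   // distinct modules fetched
  roundTrips: number; // sequential "waves" of requests
}

// Simplified model (an assumption, not a measurement): a module is requested as soon as
// its first importer has been fetched, so its wave is its BFS distance from the entry + 1.
function estimateUnbundledLoad(graph: ModuleGraph, entry: string): LoadEstimate {
  const wave: Record<string, number> = Object.create(null);
  wave[entry] = 1;
  const queue: string[] = [entry];
  let requests = 1;
  let roundTrips = 1;

  while (queue.length > 0) {
    const current = queue.shift() as string;
    const next = wave[current] + 1;
    for (const dep of graph[current] || []) {
      if (wave[dep] === undefined) {
        // First time this module is discovered: it joins the next wave.
        wave[dep] = next;
        requests += 1;
        roundTrips = Math.max(roundTrips, next);
        queue.push(dep);
      }
    }
  }
  return { requests, roundTrips };
}

// Hypothetical application graph.
const graph: ModuleGraph = {
  "main.js": ["app.js", "router.js"],
  "app.js": ["components/button.js", "utils.js"],
  "router.js": ["utils.js"],
  "components/button.js": ["styles.js"],
  "utils.js": [],
  "styles.js": [],
};

const estimate = estimateUnbundledLoad(graph, "main.js");
console.log(estimate); // { requests: 6, roundTrips: 4 }
```

For this sample graph, unbundled loading takes six requests spread over four sequential round trips, whereas a bundle arrives in one. Multiplexing lets the six requests share a connection, but it cannot collapse the four sequential round trips.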

Angular is tightly integrated with Webpack, so moving away from it would require redesigning Angular's build to be bundler-agnostic. Given that deep integration and its reliance on features like module federation, Angular is unlikely to replace Webpack soon.
